Image caching is an important part of a mobile application: it improves both the performance and the user experience of the app. Caching images locally on the device reduces the time it takes to load them and makes the app feel more responsive. In addition, caching reduces data usage and saves battery life by avoiding repeated downloads of the same image.

Our applications are image-heavy, so metrics like network traffic consumption and responsiveness while loading images are very important for us. A properly optimized image caching strategy helped us reduce network traffic by 78% and the median response time of image loading network requests by 56%, but more about that later in this post!

In this post we’ll walk you through the different caching strategies that can be employed in mobile apps, and share how image caching has evolved in our applications.

This is the first article in the series. In the next article we’ll share the implementation details of the chosen image caching strategy, and code snippets to integrate the mechanism with popular image loading libraries for Android.

Context: all examples relate to Android, but all strategies are applicable to iOS applications as well.

Caching

Benefits

Effectively implemented caching has several benefits for both content consumers (mobile app users in our case) and content providers:

- saving network cost: caching helps to avoid downloading the same image multiple times, which can save on data usage and reduce the amount of data transferred over the network;

- reducing latency / improving responsiveness: caching images locally on the device can reduce the time it takes to load the images and make the app feel more responsive;

- providing offline usage: caching can also enable offline access to images, allowing the user to view the images even when they are not connected to the internet. This can be especially useful for apps that are used in areas with poor or unreliable network coverage;

- improving battery life: by avoiding the need to download the same images repeatedly, caching can help to save on battery life by reducing the amount of time the device’s network connection is active.

Coherence problem

The cache is, by definition, not the source of truth for the data. The cache coherence problem arises when there is a potential for conflicting updates to the same data, or when the cached data becomes stale and no longer reflects the current state of the source. Solving this problem is crucial for ensuring the correctness and integrity of the cached data. As Phil Karlton famously said, “There are only two hard things in computer science: cache invalidation and naming things.”

Various invalidation strategies and protocols have been developed to address this problem. These approaches aim to ensure that the cached data remains consistent with the data source, and that conflicting updates are resolved in a way that preserves the integrity of the data.

Image caching requirements

The requirements for image caching in mobile applications will depend on the specific needs and goals of the app, but we can define some common requirements:

- reduced network consumption: the app should download as little as possible, because traffic can be pretty expensive on mobile networks;

- fast access / immediacy: cached images should be easy and fast to access, so that they can be loaded and displayed quickly when needed by the app;

- persistence: the cached images should be persistent, so that they can be accessed even after the app is closed or the device is restarted;

- eviction policy: the caching mechanism should have a strategy for deciding which images to keep in the cache and which to evict when space is needed. This might be based on factors such as the frequency of access, the age of the image, or the available space in the cache;

- consistency: the caching strategy should be able to invalidate or remove outdated or incorrect images from the cache, so that the app always has access to the most up-to-date images.

Image loading library cache

All image loading libraries provide a default caching mechanism, and in most cases it is a simple fixed-size disk cache with LRU (Least Recently Used) eviction:

- Glide uses up to 250 MB;

- Fresco uses up to 40 MB;

- Coil uses up to 2% of the available disk space;

- Lottie uses disk cache without the eviction strategy at all;

- Picasso is the only exception here: it uses the OkHttp cache (which implements HTTP caching) with 2% of the available disk space size.

This is the simplest strategy with the lowest network traffic consumption, because once an image is cached, it stays cached until the LRU policy evicts it, which won’t happen soon (or ever, in the case of Lottie). This strategy also offers immediacy, because cached images are displayed immediately without any validation. But cache consistency is very low in this case: there will always be a lot of stale entries in the cache.

This strategy should only be used if the image data source is mutated rarely.
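To make the eviction behaviour concrete, here is a minimal in-memory sketch of a size-bounded LRU cache. It is not the implementation used by any of the libraries above (their caches live on disk and are considerably more involved); the class name `LruImageCache` and its methods are hypothetical.

```java
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical size-bounded cache with least-recently-used eviction. */
final class LruImageCache {
    private final long maxBytes;
    private long currentBytes = 0;
    // accessOrder = true: iteration goes from least to most recently used entry.
    private final LinkedHashMap<String, byte[]> entries =
            new LinkedHashMap<>(16, 0.75f, true);

    LruImageCache(long maxBytes) {
        this.maxBytes = maxBytes;
    }

    /** Returns the cached image or null; a hit marks the entry as recently used. */
    byte[] get(String url) {
        return entries.get(url);
    }

    /** Checks for presence without changing the usage order. */
    boolean contains(String url) {
        return entries.containsKey(url);
    }

    void put(String url, byte[] image) {
        byte[] previous = entries.put(url, image);
        if (previous != null) {
            currentBytes -= previous.length;
        }
        currentBytes += image.length;
        // Evict least recently used entries until the cache fits its budget again.
        Iterator<Map.Entry<String, byte[]>> it = entries.entrySet().iterator();
        while (currentBytes > maxBytes && it.hasNext()) {
            Map.Entry<String, byte[]> eldest = it.next();
            currentBytes -= eldest.getValue().length;
            it.remove();
        }
    }
}
```

Note that nothing in this sketch ever checks whether an entry is still valid: an image leaves the cache only when space runs out, which is exactly why consistency is low with this strategy.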

Periodic invalidation

Cache consistency of the previous strategy could be improved by introducing periodic invalidation. Periodic invalidation is a strategy in which the cached data is periodically checked for validity and updated or removed if it is no longer accurate or relevant. This approach involves setting a specific time interval, known as the invalidation interval, after which the cached data is checked to ensure that it is still valid or just invalidated unconditionally.

This strategy could be introduced by using one of the following methods:

- clearing the whole cache regularly (e.g. once per day);

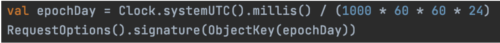

- using some temporal object (e.g. epoch day) as a part of the cache key.

Here’s a brief example of the implementation for Glide:
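A minimal sketch of the second method, assuming the invalidation interval is one UTC day. The class `DailyCacheKey` and the `#` separator are hypothetical; the code only computes the temporal part of the key and does not call Glide itself. In Glide, a value like this could be attached to a request as a signature, for example via `signature(new ObjectKey(epochDay))`.

```java
import java.time.Instant;
import java.time.ZoneOffset;

/** Hypothetical helper that mixes the current epoch day into a cache key. */
final class DailyCacheKey {
    private DailyCacheKey() {}

    /** Number of whole days since 1970-01-01 (UTC) for the given instant. */
    static long epochDay(Instant now) {
        return now.atOffset(ZoneOffset.UTC).toLocalDate().toEpochDay();
    }

    /**
     * The key changes once per day, so an entry stored yesterday is no longer
     * found today and the image is downloaded again.
     */
    static String cacheKey(String imageUrl, Instant now) {
        return imageUrl + "#" + epochDay(now);
    }
}
```

Old entries are not deleted by this approach; they simply stop being requested and are eventually removed by the regular eviction policy of the cache.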

Periodic cache invalidation helps to keep the cached data accurate and up-to-date with eventual consistency: in this example, entries are stale for at most 24 hours. However, it also results in increased network traffic and overhead, as the cache must be regularly invalidated. Immediacy also suffers in this case, because requested images have to be downloaded from the image service once per invalidation interval. As a result, periodic cache invalidation is most commonly used in situations where the cost of increased network traffic is deemed acceptable in exchange for the benefits of having accurate and up-to-date data.

External cache invalidation

External cache invalidation is a caching strategy in which the cached data is invalidated or updated based on signals or events that occur outside of the cache itself by an external actor. This approach involves setting up mechanisms or triggers that can detect when the data in the cache has become stale or outdated, and initiate the process of invalidating or updating the cache in response.

One common way to implement external cache invalidation in mobile apps is by using a messaging or notification system, such as a publish/subscribe model, to send invalidation messages to the cache whenever the data is updated on the server. The cache can then respond to these messages by invalidating the affected data and replacing it with the latest version from the server.

External cache invalidation can help to ensure that the cached data remains accurate and up-to-date, and that the app always has access to the most current information. It can also be more efficient than periodic cache invalidation, as it only invalidates the data that has actually changed, rather than checking the entire cache on a fixed interval.

Unfortunately, this strategy is usually not applicable to mobile apps due to a huge limitation: there is no reliable way to pass the invalidation event to the mobile app, because all approaches are either unreliable, drain the device battery or have a huge latency.
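For illustration only, the cache side of this strategy can be sketched as follows. The class and method names are hypothetical, and the sketch deliberately leaves out the hard part described above, namely delivering the invalidation message to the device reliably.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical cache whose entries are dropped when an external message says so. */
final class ExternallyInvalidatedCache {
    private final Map<String, byte[]> entries = new ConcurrentHashMap<>();

    void put(String url, byte[] image) {
        entries.put(url, image);
    }

    /** Returns the cached image, or null if it is absent or was invalidated. */
    byte[] get(String url) {
        return entries.get(url);
    }

    /**
     * Assumed to be called by a subscriber when the server announces that the
     * image behind this URL has changed. Only the affected entry is removed.
     */
    void onInvalidationMessage(String changedUrl) {
        entries.remove(changedUrl);
    }
}
```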

URL params

Another way to implement image caching is to use the URL parameters to store the image version identifier. This approach involves using additional URL parameters of the image that is being cached, such as a timestamp or version number. The app can then use these parameters to determine whether the cached version of the resource is still valid and up-to-date, or whether it needs to be refreshed from the server.

Most image loading libraries use the whole image URL as a cache key, so adding a URL parameter effectively invalidates the cache entry (or rather makes the library treat the image as a new one). So if all image URLs are generated on the backend side, and the backend is able to generate proper version identifiers for all images, this strategy guarantees the lowest network traffic consumption and an always consistent cache. For example, backend can generate image version parameters as follows:

Unfortunately most systems (especially legacy ones) are not designed this way, and image URLs are often constructed on the client side, e.g.

In this scenario the approach is not suitable, and other caching strategies are more appropriate.
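The effect of a version parameter on a URL-keyed cache can be sketched like this. The parameter name `v`, the example URLs and the helper class are assumptions made for illustration, not the format used by any particular backend; the version value is assumed to be URL-safe.

```java
/** Hypothetical helper that appends a version parameter to an image URL. */
final class VersionedImageUrl {
    private VersionedImageUrl() {}

    /**
     * Because the whole URL is the cache key, a new version yields a new key,
     * and the previously cached image is simply never requested again.
     */
    static String withVersion(String baseUrl, String version) {
        String separator = baseUrl.contains("?") ? "&" : "?";
        return baseUrl + separator + "v=" + version;
    }
}
```

For example, `withVersion("https://example.com/img/42.jpg", "7")` produces `https://example.com/img/42.jpg?v=7`, and bumping the version to `8` produces a different URL and therefore a different cache entry.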

HTTP cache

At this point one may ask: why can’t we use the same caching strategy as the Web? So let’s dive into the standard HTTP cache.

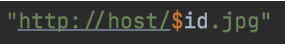

Let me briefly remind you how the HTTP cache works. The diagram above is simplified and doesn’t cover all HTTP cache control directives, but it still gives us enough understanding of the whole caching process.

The diagram depicts the following cases:

- The user wants to see `/image.jpg`, and the HTTP client cache is empty, so the client sends the request to the server. The server responds with the `200 OK` status code and the requested image, along with 2 additional response headers:

- `Cache-Control: max-age=120` means that the response should be considered fresh for 120 seconds, during which the client can use the cached response without revalidating it

- `ETag: "1111"` is the part that allows the client to make conditional requests. The value of the ETag is a unique identifier, assigned by the HTTP server, of the specific version of the resource found at the requested URL. Once the resource changes, the server should assign a new ETag. Common ways of generating an ETag include hashing the resource content, using the timestamp of the last resource modification, or even just using a revision number.

After retrieving the response, the client stores the response body along with response headers in the cache, and responds to the user with the retrieved image.

- After 60 seconds the user wants to see the same image, so the client checks whether it can use the cached image instead of making a network request. Because the image is still fresh, no additional actions are needed, and the user gets the cached image immediately.

- After another 120 seconds the user wants to see the same image again. Because the cached image has expired (it is stale), the client needs to perform a conditional request to check whether the image on the server is still the same. To do so, the client adds the `If-None-Match: "1111"` header with the ETag of the cached image. Upon getting this request, the server checks whether the current representation of the resource matches the ETag, and if so, it responds with `304 Not Modified` and an empty body. This response signals the client to use the cached resource, because it’s still valid. After receiving the `304 Not Modified` response the client responds to the user with the cached image.

- The same use case as the previous one, but the actual resource on the server has been changed. In this case the server responds with `200 OK`, the updated image as the response body, and new `max-age` and `ETag` values. After receiving this response, the client replaces the cached entry and responds to the user with the updated image.
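The client-side decision in the four cases above can be sketched as follows. This is a simplified model under the same assumptions as the diagram (only `max-age` and `ETag` are considered); the class `HttpCacheSketch` and its members are hypothetical and do not correspond to the API of any HTTP client.

```java
/** Hypothetical, simplified model of the HTTP cache decision on the client. */
final class HttpCacheSketch {
    private HttpCacheSketch() {}

    enum Action { FETCH, USE_CACHED, REVALIDATE }

    /** Metadata stored next to a cached response body. */
    static final class Entry {
        final String etag;
        final long storedAtSeconds;
        final long maxAgeSeconds;

        Entry(String etag, long storedAtSeconds, long maxAgeSeconds) {
            this.etag = etag;
            this.storedAtSeconds = storedAtSeconds;
            this.maxAgeSeconds = maxAgeSeconds;
        }
    }

    static Action decide(Entry entry, long nowSeconds) {
        if (entry == null) {
            return Action.FETCH; // case 1: nothing cached, plain request
        }
        long age = nowSeconds - entry.storedAtSeconds;
        if (age < entry.maxAgeSeconds) {
            return Action.USE_CACHED; // case 2: still fresh, no network at all
        }
        // Cases 3 and 4: stale, so the server decides between 304 and 200.
        return Action.REVALIDATE;
    }

    /** Header sent with the conditional request for a stale entry. */
    static String conditionalHeader(Entry entry) {
        return "If-None-Match: " + entry.etag;
    }
}
```

With an entry stored at second 0 with `max-age=120`, a request at second 60 yields `USE_CACHED` and a request at second 180 yields `REVALIDATE`; in the latter case the user waits for the server's answer before seeing the image.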

To use this caching strategy with our image loading library of choice, we just need to disable the library’s internal cache and set up a network fetcher based on an HTTP client that supports HTTP cache control (such as OkHttp, Volley or Cronet).

The only issue with this strategy is that it was designed for low-latency networks, because the conditional revalidation request is synchronous in this scheme (see case 3). With a potentially unstable mobile network, this can lead to unpredictable delays before the image is displayed.

So what if we can use the same caching strategy, but somehow make the invalidation request asynchronous instead? And it’s definitely possible, so please welcome the `Stale-while-revalidate` strategy!

Stale-while-revalidate

In 2010, RFC 5861, “HTTP Cache-Control Extensions for Stale Content”, was published, and since then its implementation has made its way into all major browsers. Among other things, this RFC defines an extension that allows the client to immediately return the stale response while it revalidates it in the background, effectively hiding latency from users.

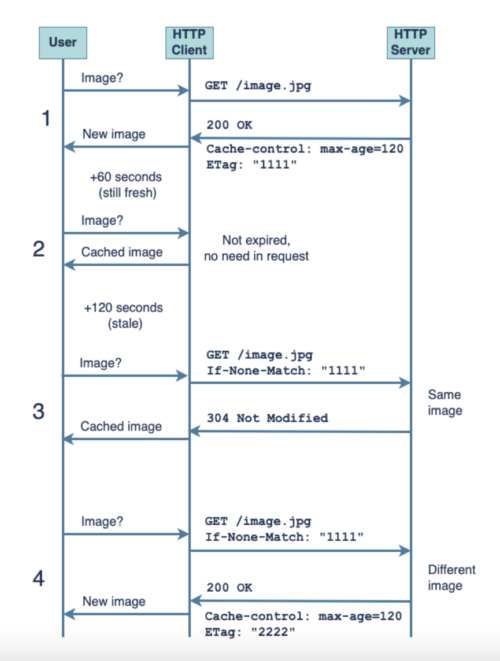

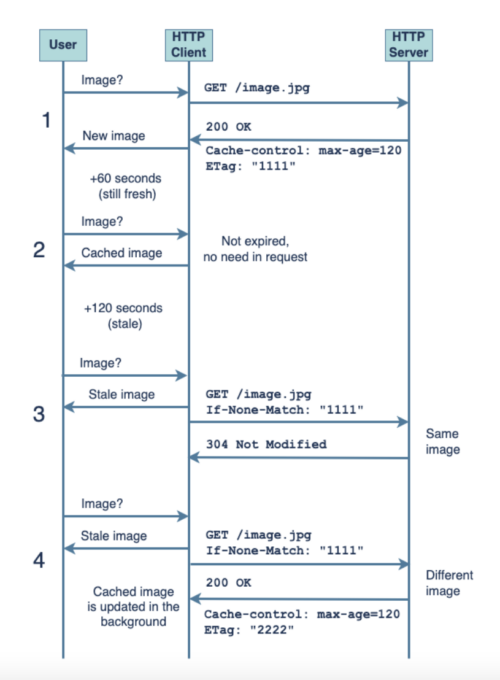

Let’s check the difference between the standard HTTP cache control mechanism and the `stale-while-revalidate`:

Cases 1 and 2 are the same here, so let’s dive deeper into cases 3 and 4:

- The user wants to see the same image after 120+ seconds have passed, which makes the cached response stale. Instead of performing a synchronous revalidation request, the client immediately returns the stale image from the cache, so the user can see it, and initiates a background revalidation request (which is exactly the same as with the standard HTTP cache). After the server responds with `304 Not Modified`, the client marks the cached response as fresh again, and nothing else happens.

- If the actual image on the server has been changed, the background revalidation request returns the updated image, and the client updates the cache (also in the background). The next time the user wants to see the image, the client returns the updated version.
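The difference from the standard HTTP cache comes down to one extra branch in the client's decision, sketched below. The three-way split follows the `stale-while-revalidate` window described in RFC 5861; the class and enum names are hypothetical, and the concrete numbers in the example are illustrative rather than taken from our configuration.

```java
/** Hypothetical model of the cache decision with stale-while-revalidate. */
final class StaleWhileRevalidateSketch {
    private StaleWhileRevalidateSketch() {}

    enum Action {
        USE_CACHED,
        USE_CACHED_AND_REVALIDATE_IN_BACKGROUND,
        REVALIDATE_BEFORE_USE
    }

    static Action decide(long ageSeconds, long maxAgeSeconds, long staleWhileRevalidateSeconds) {
        if (ageSeconds < maxAgeSeconds) {
            // Fresh: same as the standard HTTP cache (cases 1 and 2).
            return Action.USE_CACHED;
        }
        if (ageSeconds < maxAgeSeconds + staleWhileRevalidateSeconds) {
            // Stale, but inside the window: show the stale image right away and
            // send the conditional request asynchronously (cases 3 and 4).
            return Action.USE_CACHED_AND_REVALIDATE_IN_BACKGROUND;
        }
        // Too old even for serving stale: fall back to synchronous revalidation.
        return Action.REVALIDATE_BEFORE_USE;
    }
}
```

For example, with `max-age=120` and a hypothetical window of 86400 seconds, an image that is 180 seconds old is displayed immediately while the conditional request runs in the background.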

Although this strategy offers only eventual cache consistency, it’s still good enough for most use cases we have in mobile applications. The updated image will be observed by the user eventually, and “eventually” in this case usually means on the next request.

Unfortunately, this extension is still not part of the core HTTP cache control standard. It is supported by all major web browsers, but not by OkHttp, so introducing this strategy to a mobile app requires some additional effort.

Our results

As we’ve mentioned before, our applications at Delivery Hero are image-heavy, and network traffic consumption and image loading responsiveness are very important for us.

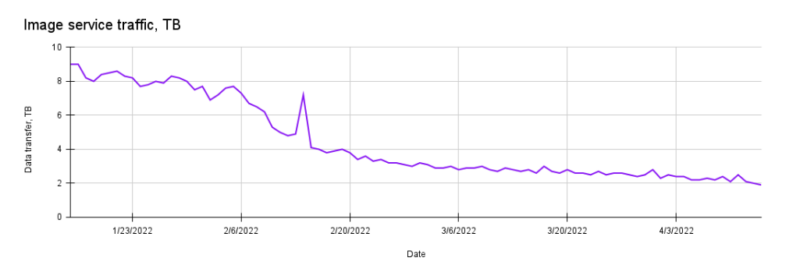

Until recently we employed periodic (daily) image cache invalidation in our project. Eventual consistency of up to 1 day was good enough for most of our use cases, but the image service network consumption was pretty high: around 9 TB per day in one of the countries. So we decided to introduce the `stale-while-revalidate` strategy using a custom OkHttp interceptor.

As a result of the new image caching strategy, once the client caches were saturated, our daily image service network traffic dropped to around 2 TB.

Also, once the client cache is saturated, the image service responds to most requests with the `304 Not Modified` status code and an empty response body, which decreases the response time. In our case the median response time for image loading network requests was reduced from 121ms to 53ms (-56%), which has a potentially big positive impact on app responsiveness.
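As a quick sanity check, the percentages quoted in this post follow from the before and after figures. The traffic numbers are approximate (“around 9 TB” and “around 2 TB”), so the first result is itself approximate; the helper below is a hypothetical one-liner, not part of our codebase.

```java
/** Hypothetical helper for the relative reduction between two measurements. */
final class Reduction {
    private Reduction() {}

    /** Relative reduction in percent, e.g. from 9 to 2 is roughly 77.8. */
    static double percent(double before, double after) {
        return (before - after) / before * 100.0;
    }
}
```

`Reduction.percent(9, 2)` rounds to 78 (daily traffic in TB), and `Reduction.percent(121, 53)` rounds to 56 (median response time in ms).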

Further reading

- RFC 5861: HTTP Cache-Control Extensions for Stale Content

- HTTP Cache

- UX Patterns: Stale-While-Revalidate

- Keeping things fresh with stale-while-revalidate
